The basics

Responsible humanitarian technology development means taking an inclusive and participatory approach to the design, development, delivery and evaluation of digital solutions.

This section brings together the foundational considerations that should guide the responsible design, development, and use of digital technology in humanitarian action. Rather than offering prescriptive solutions, it provides a shared starting point for reflection, helping teams examine how decisions about technology affect people, communities, and power dynamics throughout the technology’s lifecycle.

By engaging with these themes, users are invited to build a common understanding of responsibility, ethics, and consent, creating a solid basis for more informed, inclusive, and context-sensitive decision-making before moving into more specific approaches or tools.

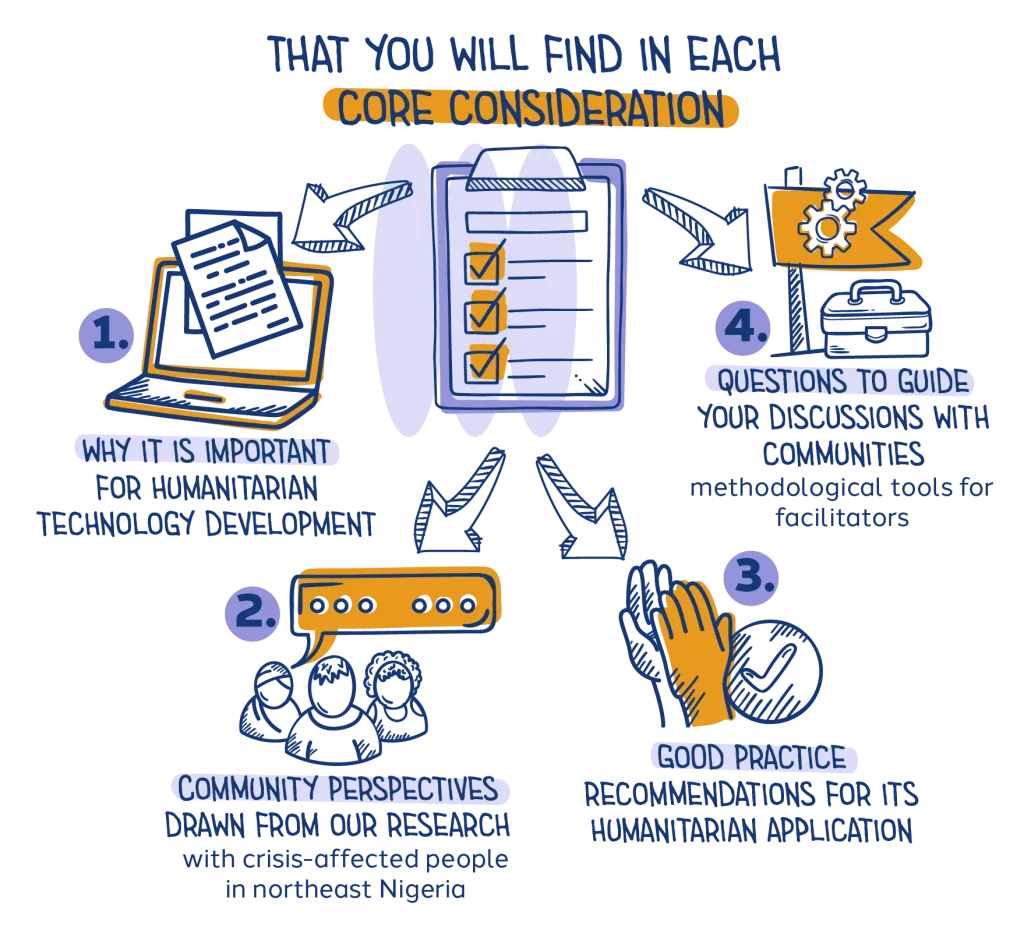

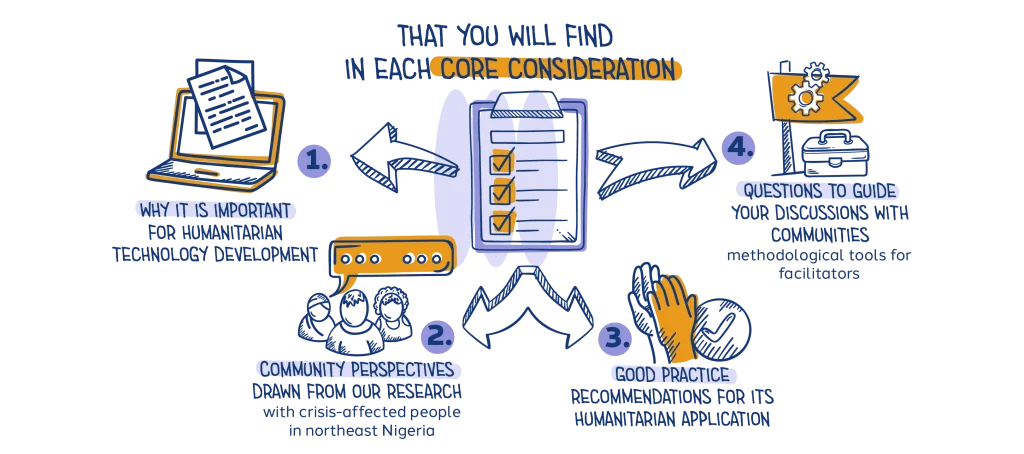

Core considerations

Throughout the process, a number of core considerations will affect the success and sustainability of humanitarian deployments of digital technology. Whether crisis-affected people accept and use digital tools will depend largely on how inclusive the design process is and how far it builds trust, invests in meaningful two-way communication, supports people’s digital skills and knowledge, and provides for data protection and informed consent.

Trust

Trust is not automatic – it must be built, earned, and maintained through transparency, engagement, and accountability.

Trust is one of the most critical factors in the successful deployment and adoption of digital technology in humanitarian settings. It must be built and maintained through consistent engagement, transparency, and ethical practices. Trust is not static: it is continuously shaped by humanitarian organizations’ actions, interactions and decisions, and by a changing environment. Without trust, even the most well-designed technological solutions risk rejection or limited adoption.

By embedding trust-building efforts into every stage of technology deployment, humanitarian actors can increase community acceptance, ensure ethical technology use, and ultimately improve the effectiveness of humanitarian interventions.

- The crisis-affected people we talked to in northeast Nigeria told us they trust humanitarian organisations that they know, that have supported communities over the last decade and have established a trusting relationship. People also emphasized that they would not trust organisations or private corporations that they don’t know.

- Despite the general trust in humanitarian organisations, people were very keen to be consulted and included in the decision-making process, including being provided with information on the risks and benefits of a potential digital solution.

- They stressed that to feel comfortable using a digital tool, they would need to know that community leaders and other influential people they trust approve of it.

Good practices for building trust

1. Engage in early, active, and ongoing dialogue

- Engage a diverse range of community members, including people in marginalised or overlooked groups, through public discussions and private conversations to fully understand their perspectives and get their suggestions.

- Clearly explain:

  - The problem that technology could help to solve;

  - Technology options that could be used to address the problem;

  - How each technology option could work and the impact it could have;

  - How community feedback will shape decisions about what technology is used and how it is developed.

- Don’t hoard knowledge: the principles of localization and accountability to affected people imply giving crisis-affected people the information they need to have a say in decision making.

- Maintain open and ongoing communication, updating communities about challenges as well as successes to reinforce transparency and credibility.

2. Expand community participation through broader conversations and clear, transparent processes

Work with communities to:

- Refine the purpose of the tool and the development process;

- Identify potential risks and limitations;

- Discuss who benefits and why, and arrive together at realistic expectations;

- Discuss who might not yet be included and how they could be involved in developing, and possibly using, the tool.

Acknowledge that no digital tool can fully meet everyone’s needs.

Be open about stakeholder roles and responsibilities. Explain which organisations, companies or agencies are or might be involved in the process.

Listen to community concerns and address fears with honesty and humility, even if you cannot fully resolve every challenge.

3. Foster community leadership and local engagement

Actively involve local leaders, both formal and informal.

Seek guidance from local organisations and grassroots groups, as they often best understand the cultural, social, and political context.

Engage trusted community figures like elders, religious leaders, media personalities, or respected advocates to help communicate the purpose and benefits of the digital tool.

Use familiar channels like call-in radio programmes to engage community members, answer their questions and correct rumours or misinformation.

Work toward shared ownership of the technology solution, ensuring that communities feel they are part of the process, not just passive recipients.

Questions relevant to trust to discuss with crisis-affected people

Trust is not assumed: it is built through dialogue, transparency, and meaningful participation. The set of questions available for download below is designed to support open conversations with crisis-affected people about their expectations, concerns, and conditions for engaging with digital technology. Rather than seeking simple answers, these questions help surface what makes technology feel safe, useful, or risky from a community perspective, who is trusted to represent others, and which voices may be missing from decision-making processes.

They also encourage reflection on how information is shared, how often updates are needed, and which communication channels are trusted locally, laying the groundwork for more accountable, inclusive, and trust-based use of technology.

Meaningful two-way communication

Clear and transparent communication in languages that people feel comfortable with ensures that communities understand the purpose of any new tool, how it works, and how they can engage with it.

Meaningful two-way communication is a critical component in the successful deployment of digital technology in humanitarian action. Ensuring that communities are well informed and engaged at every stage of a project, and that they can voice their preferences, needs, and concerns, builds trust, manages expectations, and enhances the likelihood of adoption and sustainability. Clear, transparent, and inclusive communication strategies can prevent misunderstandings and foster community ownership of digital solutions.

Dialogue is key throughout the process to ensure that the perspectives of different sections of the population are taken on board. This is particularly important in the early stages of identifying needs, selecting the appropriate digital tool, and scoping how it will be developed and deployed.

The crisis-affected people we talked with in northeast Nigeria stressed the need for clear and easily understandable communication on digital tools, in local languages and without technological or humanitarian jargon.

People often prefer to use different languages for spoken and written communication.

People with less access to and experience of digital technology emphasized the need for detailed communication about the tool being developed. They called for explanations not only of its risks and benefits, but also of its purpose and objectives, of each step in deciding on, designing, developing and deploying it, and of who would be involved and how.

Most people would prefer face-to-face communication in their local languages so they are better able to ask questions.

People would prefer to have regular opportunities and a direct channel to give feedback, express concerns, and ask questions.

Good practices for establishing meaningful two-way communication

1. Communicate in ways that are easy to understand

Use local languages, not just the dominant language, when communicating with crisis-affected people to ensure that people in marginalized groups are included and have ways to get updates, give feedback and ask questions.

Use audio, video, and pictorial formats to share information that can be understood by a broad audience with varying literacy levels.

Use plain language to break down complex technical concepts. Avoid jargon and ensure that messages are easy to understand, particularly for communities with varying literacy levels.

Share simple examples that resonate with the local context to make the technology more accessible and understandable.

2. Communicate through trusted channels

Identify and use communication methods and channels that are already trusted within the community.

Partner with local influencers, community leaders, and respected organizations to disseminate information.

Use multiple communication channels that people say they prefer, such as radio, community meetings, social media, and face-to-face interactions, to reach different sections of the population.

Use storytelling, testimonials, and case studies from successful interventions to illustrate the potential uses and benefits of the technology.

3. Encourage and enable continuous feedback

Create opportunities for communities to ask questions, voice concerns, and provide feedback. Actively listen and respond to community concerns, even if they fall outside the project’s scope, to foster trust and credibility.

Make it clear that this is an ongoing process. Share progress, challenges, and setbacks to keep people informed and expectations realistic.

Encourage continuous feedback from all community members, whether they are enthusiastic or skeptical, so that the tool can be improved and adapted to better suit their needs. Close the feedback loop by letting individuals and communities know how their feedback was incorporated, or why it wasn’t.

Questions relevant for meaningful two-way communication to discuss with crisis-affected people

Meaningful two-way communication depends on understanding how people communicate in their daily lives, which languages they use and prefer, and which channels are accessible and trusted within the community. The set of questions available for download below supports conversations that help identify communication barriers, inclusion gaps, and opportunities for participation, particularly for people who may need additional outreach or support to engage.

By exploring past experiences with providing feedback and the practical realities of access to devices and connectivity, these questions aim to inform communication approaches that are responsive, inclusive, and designed to enable genuine exchange rather than one-way information sharing.

Support for digital skills and understanding

Crisis-affected people need an understanding of digital technology to be able to meaningfully engage in communication and make informed decisions about digital tools, especially those that could directly impact their lives.

As humanitarian use of digital tools increases, including for registration and communication, it is important that people receiving assistance can access those tools safely and effectively. However, familiarity with digital technology varies widely, and many people affected by crises face challenges learning about and using technology.

Comprehensive digital skills training is not always feasible in an emergency, particularly in natural disasters. But humanitarians can still be accountable to affected people by telling them how technology will be used in a humanitarian response.

While most of the crisis-affected people we talked to had limited experience of using digital technology, digital access is a deeply gendered issue in northeast Nigeria. Women often use only simple feature phones, if they use any phone at all, and have little experience using smartphones or accessing the Internet.

People’s lack of digital experience and knowledge resulted in two different reactions. Some voiced a fear of digital technology and were not confident about asking questions and learning how to use digital tools. Others were enthusiastic about digital technology, but unaware of its potential risks and harms.

Women especially emphasized the need for digital skills training, both to understand the potential risks and benefits of using a digital tool and to know how to do so. They also stressed the importance of training to enable them to give meaningful consent.

Good practices for promoting digital skills and understanding

1. Explain the implications of humanitarian digital solutions that are not used directly by affected people:

Communicate how digital tools, such as those using algorithmic decision-making, impact aid access and delivery, including their potential for bias and automated exclusion.

Explain what personally identifiable data is, what personal data is collected, why, and how it is used. Educate communities on their rights regarding digital consent, privacy, and opting out. Explain the role of third-party technology providers in data handling and the potential associated risks.

Use visual guides, audio resources, and community meetings to explain technology in ways that are easy and convenient for affected people.

2. Provide guidance on how to use the digital tool:

Train crisis-affected people on using the tool. Ensure wide access to the tool by providing training in a range of formats, including audio and video tutorials with sign language, visual guides and interactive workshops. Ensure training includes practical exercises so people gain hands-on experience.

Promote gender equality in digital skills. Women and girls in many communities face additional challenges when it comes to accessing and using digital technology. Co-designing gender-sensitive programs and initiatives with women and girls can help bridge this gap and empower them to participate in the digital world.

Provide cybersecurity guidance, including:

- Recognizing online fraud and knowing how and where to report it.

- Using strong passwords and two-factor authentication where possible, and securing personal devices.

- Adjusting privacy settings on mobile devices and online platforms to limit unnecessary data exposure.

3. Transfer knowledge:

Encourage local ownership of digital tools by training community members to become trainers themselves. Peer learning among adolescents could be effective: once a group of adolescent trainers has been prepared, they can work with older relatives, friends and neighbours in an intergenerational “each one teach one” process.

Partner with local organizations and educational institutions to integrate digital training into broader knowledge and skill building initiatives.

Questions relevant to digital skills and understanding to discuss with crisis-affected people

Digital skills and understanding shape how people engage with technology, the benefits they can gain from it, and the risks they may face. The set of questions available for download below supports conversations that explore people’s prior experiences with technology, the barriers they encounter in accessing or using digital tools, and how these challenges may differ across the community. It also creates space to discuss perceived risks, ethical concerns, and worries about sharing personal information.

By grounding these discussions in people’s own perspectives, the questions help teams better understand what support, safeguards, and adaptations are needed to ensure technology use is safe, inclusive, and meaningful.

Data protection and security

Protecting crisis-affected people’s data is essential for preventing harm and upholding people’s right to privacy in humanitarian settings.

Humanitarian organizations collect and process sensitive personal data – from biometric IDs to mobile cash transfers – so strong data security and protection is essential. Failure to secure data exposes people to privacy violations, identity theft, discrimination, and surveillance by third parties.

By embedding strong data security and protection measures in humanitarian operations, organizations can safeguard the privacy, dignity, and rights of people needing assistance, ensuring that technology serves humanitarian goals ethically and responsibly.

The crisis-affected people we talked with in northeast Nigeria all stressed that they should be asked for their consent both when digital tools start to be used and when collecting their personal data.

While all participants were aware of risks such as fraud and identity theft, most of the people we talked to – men as well as women – were unaware of the potential consequences.

Everyone we spoke with, including community leaders, said they did not know what questions to ask humanitarians about data protection, data sharing, and data handling before consenting to the deployment of digital tools.

Good practices for data protection and security

1. Minimise data collection and strengthen data security measures:

Collect only the minimum necessary information.

Establish clear data retention policies to ensure that personal data is deleted when no longer needed.

Encrypt personal data both in transit and at rest to prevent unauthorised access.

Use multi-factor authentication and restrict access to sensitive information.

Regularly review data collection practices to ensure they align with privacy and security standards. Conduct regular security audits to identify and fix vulnerabilities.
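For teams building the tool itself, the first two practices above (collecting only what is needed and deleting data once it is no longer needed) can be written directly into the software. The Python sketch below is a minimal illustration under stated assumptions, not a prescribed implementation: the record layout, the allowlist `REQUIRED_FIELDS` and the 180-day `RETENTION_PERIOD` are all hypothetical and would in practice come from the project’s own data protection policy.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical allowlist: the only fields this illustrative project needs.
REQUIRED_FIELDS = {"household_id", "household_size", "location_code"}

# Hypothetical retention period; the real value comes from the project's policy.
RETENTION_PERIOD = timedelta(days=180)


def minimise(record: dict) -> dict:
    """Keep only allowlisted fields and drop everything else before storage."""
    return {key: value for key, value in record.items() if key in REQUIRED_FIELDS}


def is_due_for_deletion(collected_at: datetime, now: datetime) -> bool:
    """Return True once a record has been held longer than the retention period."""
    return now - collected_at > RETENTION_PERIOD


raw = {
    "household_id": "H-0042",
    "household_size": 5,
    "location_code": "LOC-7",
    "full_name": "example name",       # not needed for this purpose, so dropped
    "phone_number": "example number",  # not needed for this purpose, so dropped
}
stored = minimise(raw)
print(sorted(stored))  # ['household_id', 'household_size', 'location_code']

collected = datetime(2024, 1, 1, tzinfo=timezone.utc)
print(is_due_for_deletion(collected, datetime(2024, 3, 1, tzinfo=timezone.utc)))  # False
print(is_due_for_deletion(collected, datetime(2024, 9, 1, tzinfo=timezone.utc)))  # True
```

An allowlist is used rather than a list of fields to remove, so that any new field added later is dropped by default until someone decides it is genuinely needed.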

2. Ensure transparent and informed consent:

Provide clear, accessible explanations of:

- What data is collected and why.

- Who has access to it (for example, humanitarian agencies, third-party providers, governments).

- How long it will be stored and how individuals can request deletion.

Offer opt-in and opt-out mechanisms, ensuring people can refuse data collection without losing access to aid.

Use alternative formats (audio, video, pictorial guides) to ensure consent is fully understood, especially for people with low literacy.

3. Protect people from data misuse:

Prevent unauthorized data sharing with governments, private companies and other third parties. Apply anonymisation and pseudonymisation techniques to reduce data-related risks.

Train humanitarian staff in good data security practices to prevent accidental breaches and misuse.

Ensure that AI-driven aid systems do not reinforce biases or exclude vulnerable groups.

Align data-security practices with national and international legal frameworks, such as:

- The European Union’s GDPR (General Data Protection Regulation), a widely referenced benchmark for data protection principles.

- Local data protection laws in countries where humanitarian work is conducted.
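Pseudonymisation, mentioned above, can be as simple as replacing a direct identifier with a keyed hash, so that records can still be linked across datasets without exposing the identifier itself. The Python sketch below uses the standard library’s `hmac`, `hashlib` and `secrets` modules; the identifier format and the key handling are assumptions for illustration only. A keyed hash is used rather than a plain hash because identifiers such as registration numbers follow predictable formats, and an unkeyed hash could be reversed by trying every possible value. Pseudonymised data is still personal data under the GDPR, because whoever holds the key can re-identify people, so the key must be stored separately and protected.

```python
import hashlib
import hmac
import secrets

# Assumption: in a real deployment this key would be generated once, kept in a
# key store separate from the dataset, and never shared with partners who
# receive the pseudonymised data. Here it is generated fresh for illustration.
SECRET_KEY = secrets.token_bytes(32)


def pseudonymise(identifier: str, key: bytes = SECRET_KEY) -> str:
    """Replace a direct identifier (e.g. a hypothetical registration number)
    with a keyed SHA-256 hash (HMAC). The same input and key always give the
    same pseudonym, so records can be linked without revealing the identifier."""
    normalised = identifier.strip().upper()
    return hmac.new(key, normalised.encode("utf-8"), hashlib.sha256).hexdigest()


record = {"registration_no": "REG-000123", "assistance_type": "cash"}

# Only the pseudonym and the fields a partner actually needs are shared.
shared = {
    "person_ref": pseudonymise(record["registration_no"]),
    "assistance_type": record["assistance_type"],
}
print(shared["person_ref"][:16], "...")
```

Because the input is normalised before hashing, small differences in how an identifier is typed (spacing, letter case) still map to the same pseudonym.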

Questions relevant to data protection and security to discuss with crisis-affected people

Data protection and security are closely linked to trust, safety, and people’s sense of control over their own information. The set of questions available for download below supports conversations about how individuals feel about sharing personal data, what information they are comfortable or uncomfortable disclosing, and how well they understand what happens to their data once it is collected. It also opens space to discuss transparency around data use and sharing, as well as people’s own practices and needs related to protecting their information when using digital tools.

By grounding these discussions in lived experiences, the questions help teams identify the expectations, concerns, and safeguards needed to reduce risks and support more informed and respectful data practices.

Inclusiveness

Accessibility is key to supporting inclusion. Intentional design, in collaboration with diverse communities, is the best way to address access barriers linked to literacy, disability, connectivity, and socioeconomic factors.

Humanitarian action will continue to increase its use of digital technology. Improving accessibility is a fundamental consideration that requires careful planning to avoid excluding those who are most marginalised, among them individuals with low literacy levels, disabilities or limited connectivity, or who speak or read minority languages. Accessibility must be built into consultation, design, implementation, and monitoring and correction processes to ensure equitable access to technology.

Crisis-affected people we spoke with in northeast Nigeria described barriers to technology access including literacy levels, language, cost, and cultural restrictions.

People in northeast Nigeria want to be involved in the design and adaptation of technology to ensure that they can use it easily.

They prefer solutions linked to existing platforms that they know and can use confidently. Women proposed linking technology solutions to existing platforms where they discuss and get information on livelihood opportunities in order to increase uptake.

Women emphasized the need for multilingual and audio- and video-based solutions to make technology and knowledge about technology available to people who cannot read.

Good practices for inclusiveness

1. Address diverse needs:

Literacy levels: Use visual, audio, pictorial and simplified text-based communication to accommodate varying literacy levels.

People with disabilities: Implement accessibility features such as screen-reader compatibility, voice commands, alternative input methods, captions and alternative text.

Language and cultural adaptation: Test content in every language, and any visualizations, with the people you intend the information for, to check that they understand it the way you intended.

Age-inclusive design for older adults: Consider the needs of older adults and individuals unfamiliar with digital technology so the tools are easier to use.

Age-inclusive design for younger people: Remember also to work with adolescents and consider their needs and skills.

Easy-to-use and intuitive interfaces: Design user-friendly tools with intuitive interfaces that can be used with minimal prior knowledge. Conduct usability testing.

2. Use multiple channels and digital inclusion strategies

Blended approach: Combine no-tech, low-tech, and high-tech solutions to promote broad accessibility. (Examples include posters, community meetings, radio broadcasts, Interactive Voice Response (IVR) systems, SMS, and chatbots.)

Offline availability: Develop digital tools that can function offline for use in areas with poor or patchy connectivity.

Overcoming barriers: Explore options like public technology hubs to address limited smartphone ownership, high data costs, and gendered digital divides.

3. Localize content and use familiar platforms

Community involvement: Engage crisis-affected people as your partners and co-design, test and modify the technology tool to ensure usability and relevance.

Localised content: Adapt content and features (chatbot personas, wording, languages, etc.) to reflect the people in the contexts where the tool will be used. Find ways to follow cultural norms while still including women, girls and members of other marginalised groups in communication content.

Familiar platforms: When possible, engage through popular local channels (social media apps, messaging platforms, etc.) to increase uptake.

Questions relevant to inclusiveness to discuss with crisis-affected people

Inclusiveness in digital technology requires understanding who may be excluded, why, and under what conditions. The set of questions available for download below supports conversations that explore how different people experience barriers to using digital tools, including challenges related to disability, age, connectivity, cost, and access to devices. It invites reflection on what design features, formats, or shared solutions could make technology more usable and accessible for a wider range of people.

By grounding discussions in everyday realities, these questions help teams identify practical adaptations that ensure technology acts as a bridge rather than a barrier in humanitarian contexts.

A note on emerging technologies

Emerging technologies in humanitarian action are digital tools and innovations that are relatively new to the sector and still being tested or scaled. They aim to make aid faster, more targeted, and more efficient, but they also come with risks around ethics, data, and access that the sector is still working through. These solutions have the potential to address a wide range of problems. While this makes them attractive for humanitarian use, digital solutions are only effective if they are designed around the problem, not around a particular technology.

Participatory approaches start from the community’s perspective on the problem in order to determine the right solution. If the right solution makes use of emerging technologies, that introduces additional complexity and risks that the intervention will need to address.

The humanitarian sector should therefore use these technologies in as participatory a way as any other digital tool. That implies engaging target audiences, understanding limitations and constraints as well as opportunities, identifying risks and seeking informed consent, adapting to needs and giving communities agency in decision making. The same principles apply, whatever technology is involved.

Examples of emerging technologies that are being explored and used in humanitarian action

Artificial Intelligence (AI): AI systems analyse large datasets to support predictions, classifications, or decisions in humanitarian action.

- AI systems are rapidly evolving and often difficult to explain, audit, or challenge.

- Key risks – Bias, lack of transparency, unclear accountability, and reinforcement of existing inequalities.

Biometrics: Biometric technologies use physical or behavioral traits to identify individuals and manage access to services.

- Biometric data is highly sensitive and irreversible, with long-term impacts that are still uncertain.

- Key risks – Surveillance, exclusion, misuse of data, and loss of control over personal identity.

Blockchain: Distributed ledger technologies used to record transactions or manage identity and aid delivery.

- Governance models are unclear and evidence of sustainable use at scale remains limited.

- Key risks – Inflexibility, unclear responsibility, exclusion of users, and difficulty correcting errors.

Drones and aerial technologies: Drones are unmanned aerial systems used for activities such as mapping, monitoring, assessments, or delivering supplies in humanitarian contexts, particularly in hard-to-reach or crisis-affected areas.

- The use of drones in humanitarian settings is still evolving and raises unresolved legal, ethical, and social questions, especially in fragile or conflict-affected environments where their presence can be misinterpreted.

- Key risks – Perceptions of surveillance or militarisation, risks to privacy and safety, lack of informed community consent, and erosion of trust if the purpose and use of drones are not clearly understood or agreed upon.

Ethical considerations

Understand the ethical implications of technology before deciding whether, and how, to use it.

Before collaborating with people in crisis-affected communities, it is worth understanding some of the ethical pitfalls of digital technology – including the newest innovations, often viewed collectively as emerging technology.

Humanitarians and technology developers can avoid problems by considering the points below.

Power and decision making

Digital solutions are often developed by external actors (governments, corporations, donors), sidelining local communities and the organisations working directly with them.

Who controls and benefits from these technologies?

Accountability and bias

AI-driven decision-making (for instance for predicting famine risk or allocating aid) can be opaque and biased.

Who is accountable if an algorithm or technology causes harm?

Dependency and sustainability

Some digital solutions create long-term dependency on external providers instead of strengthening local capacities.

High costs, maintenance issues, and lack of contextual adaptation can make digital solutions ineffective.

Ethics of experimentation

Humanitarian crises are not testing grounds, yet new technologies are often piloted in these settings without full ethical safeguards.

There is a risk of “technological colonialism,” where solutions are imposed without local agency or oversight.

Informed consent

In humanitarian contexts, informed consent is critical.

Crisis-affected people are often in vulnerable situations where they cannot make free decisions for many reasons, including a fear of losing assistance if they say ‘no’. Whether technology is used for data collection, biometric identification, digital cash transfers, or communication, ensuring ethical and meaningful consent is essential to protect people’s rights, dignity, and safety.

Key considerations when developing consent policies and seeking informed consent:

Understand the technology

Meaningful informed consent depends on a clear understanding of how a technology works and what its use implies for individuals and communities.

This includes knowing what data is collected, how it is stored, processed, and shared, and who may have access to it under different circumstances. It also requires considering how people will interact with the technology in practice, including the devices required and the level of connectivity needed.

These questions encourage teams to examine these aspects critically, identify potential risks, and ensure that consent is based on transparent, accessible, and contextually relevant information.

Opening questions

- What data is collected in the consent process and could it identify an individual?

- How is the data stored and protected?

- How will it be processed, who will have access to it, how will it be shared and in what situations?

- What are the risks to individuals or the community if their data ends up with people who shouldn’t have it?

- What devices and level of connectivity are required?

Understand the people affected by the crisis

Informed consent is only meaningful when it reflects how people understand and experience technology in their own context.

This requires recognising different levels of digital awareness, knowledge, and perceptions of risk and benefit, as defined by the people themselves. It also involves using familiar concepts, appropriate languages, and accessible formats to support conversations about technology.

These questions invite teams to move beyond assumptions, identify who may face additional barriers, and ensure that consent processes are grounded in the lived realities of diverse individuals and groups within a community.

Opening questions

- What is people’s level of awareness and knowledge of technology and of the potential risks and benefits as they define them?

- What are familiar or relatable concepts that can help explain technical topics?

- What formats should be used to support a conversation around technical topics (audio, illustrations, etc.)?

- What languages should this take place in, using which words and concepts?

- Which individuals or marginalised groups may struggle more?

- Are certain groups of people perhaps wrongly assumed to be unfamiliar with technology?

- Who in a community may have experience of using such applications? Make sure to include adolescents, who are often left out of design activities.

- How do people interact with technology – is sharing phones a common practice, particularly for women? If so, how does that sharing work?

Informed consent is not obtained; it is built!

Communicate

Explain the risks of using the technology being considered, focusing on personally identifiable information.

Validate

Before asking people for their consent, ask questions to check that they truly understand the risks and their rights regarding data, including the right to withdraw their consent.

Record consent

Make the consent process easy and clear, using the right language and graphics or audio where necessary.

The dangers of assuming consent

During research for this playbook, CLEAR Global heard from community members that they would consent to the use of artificial intelligence (AI). Their main concerns with AI were that they would be unable to use it or that it would replace the need for human work and further exclude many people in vulnerable communities. Most of the people we talked with were unaware of other risks associated with AI, including data protection and privacy issues. Their consent to the use of AI-enabled technology would therefore not be fully informed.

This shows that the process of collecting genuinely informed consent cannot be neglected in favour of other considerations. Aid organisations and their technology partners are responsible for ensuring that individuals are aware of the risks and understand what they are consenting to.

Meet the community: the personas

Personas are fictional but research-based representations of real people, designed to capture the diverse experiences, needs, and challenges of those interacting with technology tools. In humanitarian settings, using personas can help bridge the gap between technological design and real-world application by ensuring that solutions are developed with a deep understanding of users’ contexts.

The use of personas serves several key purposes:

Enhancing empathy and understanding: Personas bring the voices of affected people into the design process, ensuring that digital tools are developed with a user-first mindset.

Highlighting barriers and needs: Different groups face distinct challenges related to knowledge of digital technology, trust, accessibility, and data protection. Personas illustrate these differences and help identify potential roadblocks.

Facilitating inclusive design: By representing a range of users, including those who may be marginalised, personas encourage solutions that are accessible, culturally appropriate, and responsive to diverse needs.

Encouraging meaningful engagement: Personas help shift the focus from technology-driven to community-driven approaches, fostering deeper engagement with the intended users.

Community personas

CLEAR Global spoke with 159 people in Borno and Adamawa States in northeast Nigeria in focus group discussions and ideation workshops. In these participatory sessions, crisis-affected people shared their perspectives and concerns about the use of new digital technology in the region, and whether and how they want to participate in the choice, design, deployment and evaluation of humanitarian technology applications and platforms.

While this playbook draws on research from Nigeria, its core approaches can be used worldwide, adapted to local circumstances and infrastructure. They offer a framework for designing ethical, effective, and human-centred technology in all humanitarian responses.

Maimuna

I’m Maimuna, aged 53. I’m married and have five children. I speak Kanuri and Hausa, but I don’t know how to read or write. I rely on my husband, the community leader, and the women’s leader for information on the help that may be available to us.

How I use and perceive technology

I don’t use technology in my daily life and I don’t have a phone. I do own a SIM card, which I put in my neighbour’s phone when I need to make calls, but most of the time I don’t have the money to buy phone credit. Our family uses one of those chip cards the humanitarians gave us to receive aid, but my husband is in charge of it. When they introduced the chip cards a few years ago, I was very sceptical because people said they were evil. Some people also say that women shouldn’t use technology and the Internet because of the risk of fraud and because there is dangerous content. I wouldn’t know how to protect myself from that.

Should humanitarians deploy technology?

It depends. Technology used by humanitarians, for example when we register for help, has made it much faster for us to receive aid, so that’s a good thing! And smartphone owners use voice-based applications that could also help me. But if humanitarians use more technology, what will happen to people like me who can’t afford a device and don’t know how to use technology? Will we be excluded? I’m worried!

Fatima

I’m Fatima, aged 24. I finished secondary school and don’t have a job now. I speak Fulani and Hausa, and read and write English. I prefer written information in English as I’m not used to reading or writing in Fulani or Hausa. I get information on our situation from my parents and friends.

How I use and perceive technology

I have a small phone for making calls. I would really like to have a smartphone, but I can’t afford it. Some of my friends own smartphones and I ask them to look up information for me on the Internet. We also watch TikTok and other social media, or use the phone to listen to the radio. I don’t use any other technology apart from my phone, and I haven’t heard of humanitarians making any technology available for us to use.

Should humanitarians deploy technology?

Yes, if it helps us. Technology is a good thing and we need more of it. If they use technology, humanitarians should also teach us how to use it safely so that it makes our lives better. We really need that! When it comes to new technology like this artificial intelligence everyone is talking about, I’m not sure because I don’t know anything about it. If it helps us and is in line with our religion then yes, but if you know there is a risk, then don’t ask us to use it. Whatever tools you come up with, I’d only use them if our bulama (leader) approves of them. I’m curious!

Idris

I’m Idris, aged 19. I’m a student. My first language is Marghi, but in my daily life I speak Hausa. I read and write very well in English. I regularly attend the meetings with the bulama to get information on our situation; I also read information shared in WhatsApp groups, and I search the Internet as well.

How I use and perceive technology

I own a smartphone and browse the Internet daily for my studies. I also use artificial intelligence applications like ChatGPT. I use WhatsApp a lot with friends and I’m a member of several WhatsApp groups. When I install new apps, I try to check the source to make sure the app is not a fake. Technology is great, it’s everywhere in the world today, and the Internet connects me to things happening elsewhere. Unfortunately, some people use technology and the Internet for fraud and other bad purposes, or to look at inappropriate content.

Should humanitarians deploy technology?

Yes, absolutely! It will help our community to advance and it will make humanitarian aid more efficient. I personally won’t face any issues using technology, and if it’s something new, you can show us how to use it. I’m excited!

Zainab

I’m Zainab, aged 39. I’m married with two children. I speak Shuwa Arabic, Hausa and English. I used to volunteer for a humanitarian organisation, helping mostly with data collection. I now work for the organisation as a community engagement support officer. I get information directly from humanitarians, the Internet and the media.

How I use and perceive technology

I own a smartphone that I also used for data collection as a volunteer (Kobo and ODK Collect). I have basic knowledge of how to use a computer, but I don’t need it very often. Technology is a game changer. Even if someone can’t read or write, if you show them how, they can use a device.

Should humanitarians deploy technology?

Yes, especially for women. We’re not tech-savvy, but we want women to be enlightened and build their knowledge and be included. We currently have “old” knowledge; we need more knowledge and we need to be engaged. Anything that is progressive to us and that will help our community is accepted. I’m supportive!

Adamu

I’m Adamu, aged 50. I have two wives and eight children. I’m the bulama, the elected leader of this community. I speak Shuwa Arabic, Hausa and Kanuri, and I know some Gamargu and English. I feel most confident reading and writing in Hausa. I get information on our situation from the humanitarians on an almost daily basis, and I act as the link between them and community members. I also support humanitarians from all organisations in implementing their projects in our community.

How I use and perceive technology

I recently bought a smartphone, but I haven’t mastered it fully yet. I mostly use it for making calls and using WhatsApp. Lately I have joined some video calls. I also use my smartphone to browse the Internet if I’m looking for information. Technology has its advantages and disadvantages, but the advantages prevail. You can trace thieves; you can retrieve information that was stored 10 years ago and have it shown to you live. Technology is good, it’s transparent. Technology is better than text in books, because books can decay. The disadvantage, especially with AI, is that it takes away jobs, and that will make it difficult for the younger generation to build a livelihood. However, some technology is gradually reaching us. We want to have it here rapidly to use and benefit from it.

Should humanitarians deploy technology?

Yes, we’re ready for it and our community has the capacity to support it. We trust the organisations we’ve been working with over the last decade, so let’s sit down and discuss what you have to propose, the benefits and risks, and the impact it will have on our community. I’m attentive!

This study examines six pilot projects led by three prominent international NGOs across four countries to identify obstacles to innovation, adoption, and scalability that may arise in this context.

The Kaya learning platform describes this free course as a "collection of tools and practices that combines crowdsourced data gathered from affected communities and frontline responders with artificial intelligence (AI) for more effective crisis mitigation, response or recovery." It contains four modules that take approximately 20 minutes each to complete.

Live discussion from CDAC Network's panel at HNPW Conference 2025. Brings together a number of organisations in the tech-humanitarian space who are leading on harnessing AI in a way that puts people affected by crisis at the centre of these new technological developments.

Guidelines on ethical AI and data security

ICRC: The ICRC’s artificial intelligence policy is anchored in a purely humanitarian approach driven by its mandate and Fundamental Principles. It is meant to help ICRC staff learn about AI and safely explore its humanitarian potential.

ICRC: Protecting individuals' personal data is an integral part of protecting their life, integrity and dignity. Therefore, personal data protection is of fundamental importance for humanitarian organizations.

Use of biometrics and safeguarding policies

ICRC: As new technology provides new opportunities for the organisation to use biometrics in different contexts, the ICRC has adopted a dedicated Biometrics Policy designed to facilitate their responsible use and address the data protection challenges this poses.

Oxfam, Policy and practice: Biometrics is the measurement of human characteristics through technology such as iris scanning, facial recognition and fingerprint scanning.

Potential risks of using mobile technology to support migrants: Digital innovation has been one of the defining responses to record global humanitarian displacement and particular crises.

Community engagement and AI: At the recent World Summit AI, CDAC’s Executive Director, Helen McElhinney, made the case for deeper community participation in the development and use of artificial intelligence (AI) technologies in humanitarian settings.

Opportunities for emerging technology:

The design process